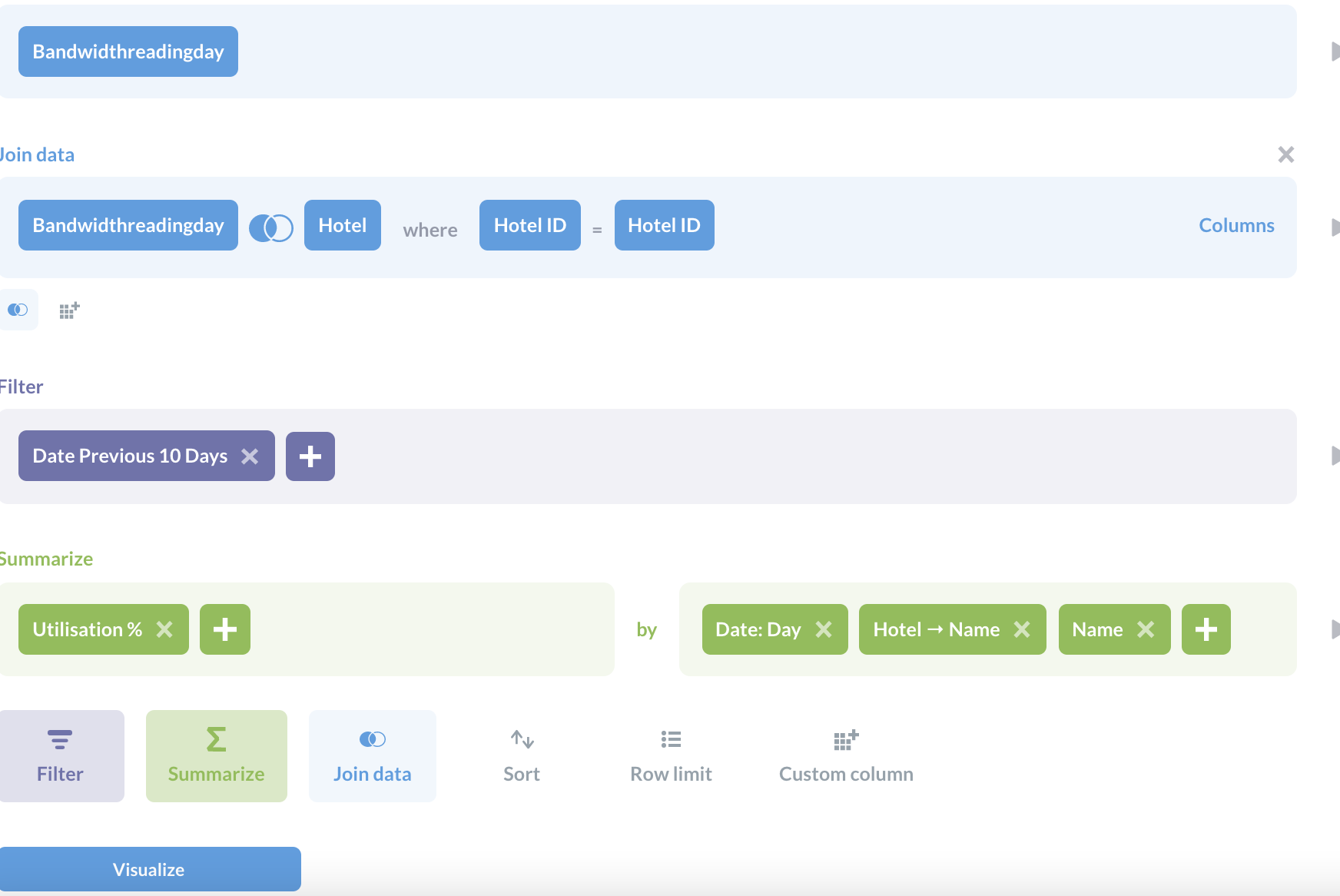

We frequently help our customers implement data platforms on a grand scale: as a backend for user-facing applications, for business analytics, or for data science and machine learning projects. Two common trends across different business areas are (1) a growing amount of data arriving at high speed, i.e. data streams of various formats, and (2) the requirement to perform complex analytic slice-and-dice queries on recent and historic datasets. One possible technical solution to address these needs is Apache Druid, a highly scalable distributed data store optimized for event-oriented data and real-time analytics.

Being a kind of crossover between a time series database, a search index and an analytical (OLAP) database, Druid enables large-scale analytics on streaming data at AirBnB, Lyft and Criteo, among others. We at inovex have successfully used Druid in various projects and gained experience in building production applications with it, especially regarding the tradeoff between its enhanced capabilities and the complexity of a distributed system with different kinds of services.

However, this blog post does not focus on Druid itself; feel free to contact us for an in-depth discussion, or refer to our case study or the official documentation. Instead, the focus of this post is a crucial aspect of interactive exploration and analysis of data which we see in almost every project, namely which (graphical) frontend is used. This is typically the turf of “classic” Business Intelligence platforms like Tableau or PowerBI, and some of these (e.g. MetaBase) also offer connectivity to Druid. Apart from that, specialized frameworks have been developed which are more tightly integrated with, and more specifically tailored to, the novel paradigms offered by Druid. One of the most recent options in this space is Turnilo. In this post, we introduce Turnilo, explain its configuration and usage, and share our evaluation outcome. For completeness, we also provide a list of current alternatives.

Until November 2016, Pivot, a graphical interface mainly developed by Druid’s co-authors, was available as open source software. When Pivot became a closed-source commercial product, the Polish e-commerce platform Allegro adopted a fork of the latest open version. Under the new name Turnilo, it is now being developed further and is openly available under the Apache License.

Turnilo is a simple web application written in TypeScript that runs wherever Node.js 8.x or 10.x (and npm) is installed. It is specifically tailored to Druid and does not connect to other databases as of now. However, it is possible to load static files and inspect them with Turnilo. In Turnilo terminology, users inspect data cubes, which mirror Druid data sources (or a static file). Dimensions and measures correspond to Druid’s dimensions and metrics. Dimensions can be split and filtered on, which is similar to the GROUP BY and WHERE clauses in SQL. Upon connecting to Druid, Turnilo automatically scans for data sources and their specifications, which helps with the initial setup.

A powerful YAML file contains all the configuration of available data sources. The Plywood expression language can be used to create custom dimensions and metrics that are not already present in the underlying Druid data source. Even simple aggregations like average or min/max values for specific metrics need to be defined here. With Turnilo, it is possible to explore data already stored in the connected Druid cluster; it cannot be used to set up new data streams or batch uploads. Turnilo doesn’t feature any user or access management.
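As a rough illustration of the split, filter and measure concepts described above, the following sketch mimics in plain TypeScript what happens conceptually when a data cube is filtered on one dimension (WHERE), split on another (GROUP BY), and measures such as average, minimum and maximum are computed per group. This is not Turnilo's or Plywood's API: the `Row` shape, the `splitAndFilter` helper and the sample values are hypothetical and exist only for this example.

```typescript
// Illustrative only: plain TypeScript, not Turnilo's or Plywood's API.
// The row shape and all sample values are hypothetical.
interface Row {
  channel: string; // a dimension
  country: string; // another dimension
  delta: number;   // a raw metric
}

// Measures have to be defined explicitly, even simple ones.
interface Measures {
  count: number;
  avgDelta: number;
  minDelta: number;
  maxDelta: number;
}

// Filter rows (like WHERE), split them by a dimension (like GROUP BY),
// then compute the measures for every resulting group.
function splitAndFilter(
  rows: Row[],
  filter: (row: Row) => boolean,
  splitOn: (row: Row) => string
): Record<string, Measures> {
  const groups: Record<string, number[]> = {};
  for (const row of rows) {
    if (!filter(row)) continue;
    const key = splitOn(row);
    const values = groups[key] || [];
    values.push(row.delta);
    groups[key] = values;
  }

  const result: Record<string, Measures> = {};
  for (const key of Object.keys(groups)) {
    const values = groups[key] || [];
    const sum = values.reduce((a, b) => a + b, 0);
    result[key] = {
      count: values.length,
      avgDelta: sum / values.length,
      minDelta: Math.min(...values),
      maxDelta: Math.max(...values),
    };
  }
  return result;
}

const sampleRows: Row[] = [
  { channel: "en", country: "US", delta: 10 },
  { channel: "en", country: "US", delta: 30 },
  { channel: "en", country: "DE", delta: 5 },
  { channel: "de", country: "DE", delta: 50 },
];

// Roughly: SELECT country, COUNT(*), AVG(delta), MIN(delta), MAX(delta)
//          FROM rows WHERE channel = 'en' GROUP BY country
const byCountry = splitAndFilter(
  sampleRows,
  (row) => row.channel === "en",
  (row) => row.country
);
```

In Turnilo itself, none of this is written by hand: the split and filter are chosen in the user interface, and the measures are declared in the configuration as Plywood expressions. The sketch only shows what the resulting groups and aggregates look like.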
